Ethics and regulation of artificial intelligence: How investors can navigate the maze

Danai Jitawattana

By Saskia Kort-Chick and Jonathan Berkow

From moral hazard to brand damage to regulatory uncertainty, AI poses challenges for investors. But there is a way forward.

Artificial intelligence (AI) raises many ethical issues that can translate into risks for consumers, businesses and investors. AI regulation, which is evolving unevenly across multiple jurisdictions, adds to the uncertainty. In our view, the key for investors is to focus on transparency and explainability.

The ethical issues and risks of AI start with the developers who create the technology. From there, they flow to the developers’ customers (companies that integrate AI into their businesses) and then to consumers and society more broadly. Through their holdings in AI developers and in companies that use AI, investors are exposed to both ends of the risk chain.

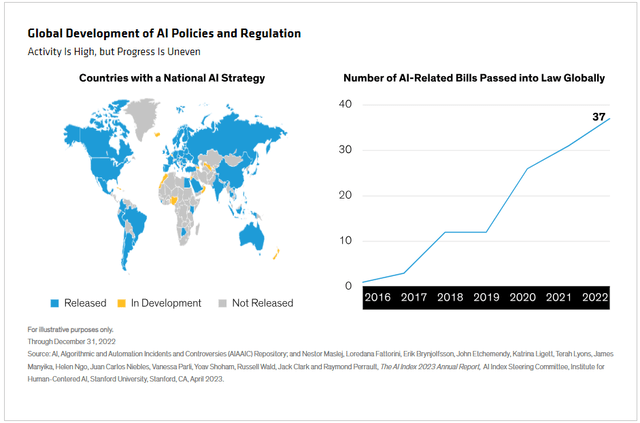

AI is developing rapidly, far ahead of most people’s understanding of it. Global regulators and legislators are among those trying to catch up. At first glance, their AI-related activity has grown strongly in the past few years: many countries have issued relevant strategies, and others are close to introducing them (Display).

In reality, progress has been uneven and is far from complete. There is no uniform approach to regulating AI across jurisdictions, and some countries introduced their regulations before ChatGPT launched in late 2022. As AI spreads, many regulators will need to update and perhaps expand the work they have already done.

For investors, regulatory uncertainty compounds the other risks that AI poses. To understand and evaluate how to deal with these risks, it helps to have an overview of the AI business, ethical and regulatory landscape.

Data risks can hurt brands

AI comprises a set of technologies geared toward performing tasks normally done by humans, and performing them in a human-like way. AI and business can intersect through generative AI, which covers various forms of content generation, including video, audio, text and music; and through large language models (LLMs), a subset of generative AI focused on natural language processing. LLMs serve as foundational models for various AI applications (such as chatbots, automated content creation, and analyzing and summarizing large volumes of information) that companies are increasingly using to engage their customers.

However, as many companies have found, AI innovations can come with potentially brand-damaging risks. These can arise from biases inherent in the data on which LLMs are trained. Such biases have led, for example, to banks inadvertently discriminating against minorities when approving home loans, and to a US health insurance provider facing a class-action lawsuit alleging that its use of an AI algorithm caused elderly patients’ extended-care claims to be wrongly denied.

Bias and discrimination are just two of the risks targeted by regulators that should be on investors’ radar; others include intellectual property rights and data privacy considerations. Risk-mitigation measures should also be scrutinized, such as developers testing the performance, accuracy and robustness of AI models, and providing companies with transparency and support in implementing AI solutions.

Dig deeper into AI regulations

The regulatory environment for AI is evolving in different ways and at different speeds across jurisdictions. The latest developments include the EU’s AI Act, which is expected to come into force around mid-2024, and the UK government’s response to the consultation process triggered by last year’s launch of its AI regulation white paper.

Both efforts illustrate how regulatory approaches to AI can differ. The UK is adopting a principles-based framework that existing regulators can apply to AI issues within their own domains. In contrast, the EU act introduces a comprehensive legal framework with risk-graded compliance obligations for developers, companies, importers and distributors of AI systems.

In our view, investors should do more than just delve into the details of each jurisdiction’s AI regulations. They should also learn how jurisdictions manage AI issues using laws that predate and lie outside AI-specific regulation: for example, copyright law to address data infringement, and employment legislation in cases where AI affects labor markets.

Fundamental analysis and engagement are key

A good rule of thumb for investors trying to assess AI risks is that companies that proactively make full disclosure of their AI strategies and policies are likely to be well prepared for new regulations. More generally, fundamental analysis and issuer engagement, the basics of responsible investing, are crucial to this area of research.

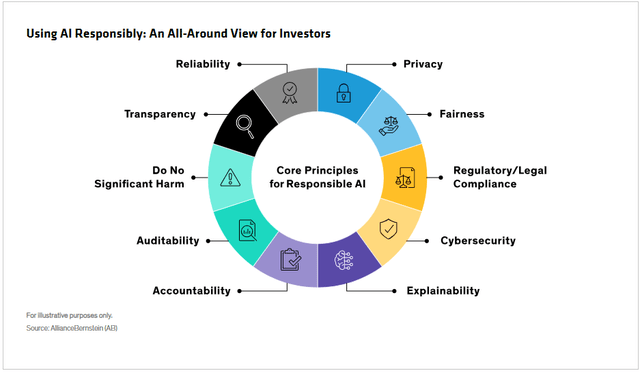

Fundamental analysis should delve not only into AI risk factors at the company level but also along the business chain and across the regulatory environment, testing insights against the core principles of responsible AI (Display).

Engagement conversations can be structured to cover AI issues not only in terms of their impact on business operations, but also from an environmental, social and governance perspective. Questions investors should ask boards and management include:

- AI integration: How has the company integrated AI into its overall business strategy? What are some specific examples of AI applications within the company?

- Board oversight and expertise: How does the board ensure that it has sufficient expertise to effectively oversee the company’s AI strategy and its implementation? Are there any specific training programs or initiatives?

- Public commitment to responsible AI: Has the company published a formal policy or framework on responsible AI? How does this policy align with industry standards, AI ethical considerations and AI regulation?

- Proactive transparency: Does the company have any proactive transparency measures in place to withstand future regulatory impacts?

- Risk management and accountability: What risk management processes does the company have in place to identify and mitigate AI-related risks? Is responsibility for overseeing these risks delegated to a specific person or body?

- Data challenges in LLMs: How does the company address privacy and copyright challenges associated with the input data used to train large language models? What measures are taken to ensure that input data complies with privacy regulations and copyright laws, and how does the company address restrictions or requirements related to input data?

- Bias and fairness challenges in generative AI systems: What steps is the company taking to prevent or mitigate biased or unfair results from its AI systems? How does the company ensure that the outputs of any generative AI systems it uses are fair, unbiased, and do not perpetuate discrimination or harm to any individual or group?

- Incident tracking and reporting: How does the company track and report incidents related to its development or use of AI, and what mechanisms are in place to address and learn from these incidents?

- Metrics and reporting: What metrics does the company use to measure the performance and impact of its AI systems, and how are these metrics reported to external stakeholders? How does the company maintain due diligence in monitoring regulatory compliance for its AI applications?

Ultimately, the best way for investors to find their way through the maze is to stay grounded and skeptical. AI is a complex and fast-moving technology. Investors should insist on clear answers and not be unduly swayed by elaborate or complicated explanations.

The authors would like to thank Roxanne Lu, ESG Analyst in AB’s Responsible Investment Team, for her research contributions.

The opinions expressed here do not constitute research, investment advice or trading recommendations and do not necessarily represent the opinions of all of AB’s portfolio management teams. Opinions are subject to review over time.

Editor’s note: The summary points for this article were selected by Seeking Alpha editors.